An Important Piece of Evidence That Argues Against America’s “Great Stagnation”

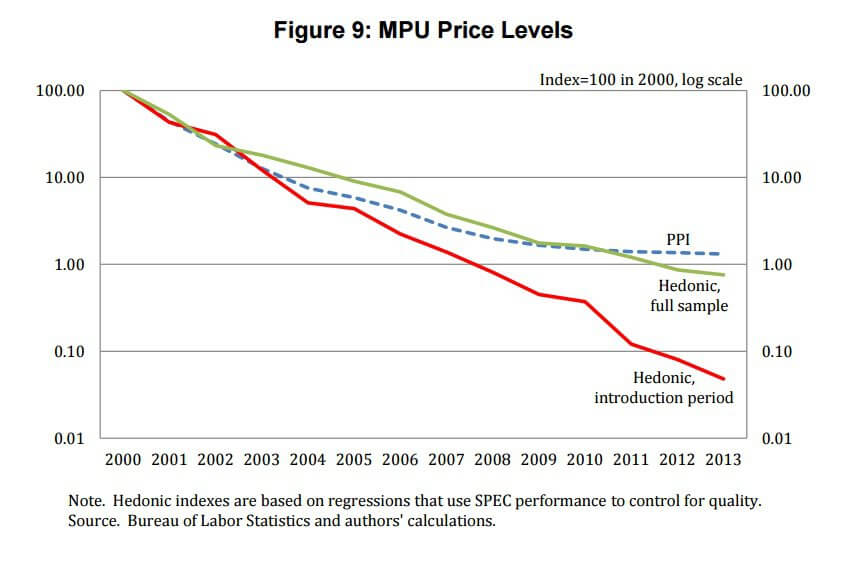

It’s an economic puzzlement. The US producer inflation index suggests computer chip prices have been flattish in recent years after a rapid decline from the mid-1980s through the early 2000s. Yet there is also evidence that microprocessor performance has continued to improve. Given the apparent relationship between declining chip prices and the pace of innovation, it would be really bad news if the slowing pace of price declines means innovation is slowing too. And really bad news for the overall economy. After all, semiconductors are a “general purpose” technology behind advances in areas such as machine learning, robotics, and big data. As researchers David Byrne, [AEI’s] Stephen Oliner, and Daniel Sichel explain in “How Fast are Semiconductor Prices Falling?”:

… adverse developments in the semiconductor sector could damp the growth potential of the overall economy. On the other hand, if technological progress and attendant price declines were to continue at a rapid pace, powerful incentives would be in place for continued development and diffusion of new applications of this general-purpose technology. Such applications could both enhance the economy’s growth potential and push forward the ongoing automation that has generated concerns about job displacement.

But the puzzle may have been solved. Byrne-Oliner-Sichel point out that in 2006, Intel began to dominate competitor AMD such that by 2013 AMD effectively “had been relegated” to the bottom end of the market. With less competition, Intel changed its pricing structure by keeping list prices constant (“maybe attempting to extract more revenue from price-insensitive buyers”) while offering discounts to some customers on a case-by-case basis. Such “price discrimination could reduce the information content of its posted list prices, potentially biasing the quality-adjusted indexes generated from these prices,” the researchers conclude. Indeed, there may also be a price measurement problem with the computing equipment that uses these microprocessors. The slowing decline in their prices is also a part of the argument that US innovation is stagnating. Here is the bottom line [also refer to the above chart from the study]:

The results from our preferred hedonic price index indicate that quality-adjusted MPU prices continued to fall rapidly after the mid-2000s, contrary to the picture from the PPI. Our results have important implications for understanding the rate of technical progress in the semiconductor sector and, arguably, for the broader debate about the pace of innovation and its implications in the U.S. economy. Notably, concerns that the semiconductor sector has begun to fade as an engine of growth appear to be unwarranted. Rather, these results suggest continued rapid advances in technologies enabled by semiconductors.

Published in Economics

As someone who majored in economics, it might help if you explained what ‘MPU’ and ‘Hedonic’ mean in this context, as I am unfamiliar with these terms.

MPU: Microprocessor unit

Hedonic index/pricing/regression: Basically it just takes internal and external characteristics into account when calculating the price. A good example is a house. You don’t just look at square footage, appliances, and color; you also consider location, neighborhood, school district, and the local market. All of those together create the price. I’m not sure what all would go into the hedonic pricing for MPUs, but I believe that is what is meant.

Moore’s Law still applies to Semiconductors, but not necessarily anything else.

This is sometimes called Moore’s Curse.

So if I understand this right, and someone please clarify if not…

The gradual decline in MPU prices is taken to mean that innovation is slowing, which is a possible sign of stagnation. Faster innovation would lead to a more rapid decline in the price.

However, Intel, which owns a large share of the market, has kept prices higher than the market may demand, not because of a lack of innovation, but in an attempt to win additional profits from price-insensitive buyers.

Thus innovation may be moving along at an agreeable pace; the pricing just isn’t a true reflection of that.
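To make that summary concrete, here is a minimal sketch of how a posted list price can hide falling prices. Everything in it is hypothetical: the $300 list price, the discount schedule, and the `price_index` helper are made up for illustration and are not taken from the Byrne-Oliner-Sichel study. It tracks a single chip model over three periods, so quality is held fixed.

```python
def price_index(prices):
    """Index each price to the first period (first period = 100)."""
    base = prices[0]
    return [round(100.0 * p / base, 1) for p in prices]

# Hypothetical: the posted list price of one chip model never changes.
list_prices = [300.0, 300.0, 300.0]

# Hypothetical: case-by-case discounts grow over time but are not published.
discounts = [0.0, 0.2, 0.4]
transaction_prices = [p * (1.0 - d) for p, d in zip(list_prices, discounts)]

list_index = price_index(list_prices)
transaction_index = price_index(transaction_prices)

print("index from list prices:       ", list_index)
print("index from transaction prices:", transaction_index)
```

An index built only from the posted prices looks flat, while one built from what buyers actually pay keeps falling. That is the sense in which list prices can lose their "information content".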

In an effort to take account of the rapidly increasing power of microprocessors, the Fed developed ‘hedonic price indices’. These are constructed to keep quality (i.e., processing power) constant.

To extend the housing example…

The average house built today is much larger than that built in 1950. So, if we are looking at a series of average house prices since 1950, part of that house price change is really just the increasing size of the houses over time. So we might prefer to look at price per square foot to account for that.

Or think of looking at a time series of car prices as price per horsepower. Something like that.

They try to do the same for microprocessor prices. Think of the hedonic price as price per unit of processing power.
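As a rough sketch of that "price per unit of processing power" idea, the snippet below builds a simple quality-adjusted index. The years, prices, and performance scores are invented for illustration, and a real hedonic index would come from a regression on several chip characteristics rather than a single ratio.

```python
# Hypothetical chips: (year, price in USD, performance score). All numbers are made up.
chips = [
    (2006, 300.0, 100.0),
    (2008, 300.0, 200.0),
    (2010, 300.0, 400.0),
]

def price_per_unit(price, performance):
    """Price paid for one unit of processing power."""
    return price / performance

# Use the first chip as the base period (index = 100).
_, base_price, base_perf = chips[0]
base = price_per_unit(base_price, base_perf)

index = {
    year: 100.0 * price_per_unit(price, perf) / base
    for year, price, perf in chips
}

for year, value in index.items():
    print(year, value)
```

The sticker price stays at $300 in every year, yet the quality-adjusted index halves each period because each chip delivers twice the performance of the one before it.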

Hi SEnkey. What you just described is how I take it as well. And in terms of price per unit of processing power, that is still going down.

I hope it’s just me, but instead of a graph I’m seeing a pixelated Troy Senik.

Hardware innovation is not necessarily tied to software innovation, and there still appears to be room for both where the Internet is concerned. It is a babe in arms.

Hardware is running into some hard limits where CPUs are concerned, but others are still going strong.

Of course, everyone’s favorite business partner, Big Brother, is firmly moving his FCC drag coefficient into place to smother that bright and shining star of innovation.

From health care to the Internet to the PIIGS, the real innovation today is in surviving the destructive destruction of the ham fisted graft grabs of the visible hand.

I’m not really sure this is the case. It’s true we aren’t seeing the advances in clock speed that we saw a decade ago, but that’s mostly because above about 3.5 GHz you run into walls of power inefficiency and skyrocketing heat envelopes.

We’re still seeing huge advances in transistor count, so manufacturers are moving towards massively parallel designs. The newest Xeon Processors have 18 Cores. Yes, 18. I can’t even imagine the kind of processing that a system equipped with 4 of these bad boys could do. All in the same thermal and power envelope that was standard 10 years ago.

Each of them can address up to 768 GB of memory, or up to 3 TB of RAM for that same 4 socket server.

Solid state storage went from piddly 4 MB USB sticks to 2+ TB SSDs over the last decade on the consumer market.

nVidia’s new Titan X GPU uses 3072 CUDA cores and is capable of 336.5 gigabytes per second of memory throughput.

The big push lately has actually been power efficiency, with manufacturers looking at ways to make mobile devices run longer and data centers greener. If a processor has 18 cores and nothing to do, odds are pretty good that 17 of them are shut off entirely, while the last one is in a lower C state waiting to wake a few of the others up in a split nano-second if work should arrive.

Thank you for clarifying that, I was genuinely confused. It makes much more sense now as did your other comment.