Ricochet is the best place on the internet to discuss the issues of the day, either through commenting on posts or writing your own for our active and dynamic community in a fully moderated environment. In addition, the Ricochet Audio Network offers over 50 original podcasts with new episodes released every day.

Is Micro-Plagiarism a Thing?

Is Micro-Plagiarism a Thing?

I have a friend who once developed an original axiom that explains the entire internet search industry and, not incidentally, the stubborn existence of cluttered desks. I’ve always called it, “Leeke’s Law“:

If you can find something fast enough, there’s no reason to organize.

Early in the days of the internet, there was a push to manually tag all the content people were creating to make the information on the internet easier to organize and find. But that effort, though not gone entirely, was ultimately defeated by Google, et al., because people could, in general, find what they were looking for without going to the trouble of explicitly organizing all their data.

What computers are good for, besides doing math, is performing repetitive micro-tasks, and doing those tasks faster than any human being would or could possibly do them.

If you’ve been reading anything online of late, you have probably come across some reference to ChatGPT. ChatGPT is an online service created by the folks at OpenAI. It presents a chat-like human interface to access an underlying AI model trained using billions and billions of text documents. The AI model has the ability to generate coherent text in response to text input from a user. So you can, for example, ask it to write a limerick about some subject. And, lo and behold, you’ll get back a limerick.

As it happens, ChatGPT is maxed out on users at the moment, but I’m a paying OpenAI user, so I have some access to the underlying text model without going through the chat interface.

This morning, I decided to play around with the text model and asked it to do the following:

Write a sermon on substitutionary atonement.

Here is what I got back:

My dear brothers and sisters in Christ,

Today I would like to talk to you about the doctrine of substitutionary atonement. The doctrine of substitutionary atonement is the belief that Jesus died on the cross as a substitute for us, taking upon himself our sins and the punishment that we deserved. This doctrine is based on the teachings of scripture and it is a fundamental belief of Christianity.

The Bible teaches that we are all sinners and that the penalty for sin is death. We are unable to pay for our sins and so, God sent his son Jesus to be our substitute. Through his death on the cross, Jesus paid the penalty for our sins and thus made a way for us to be reconciled to God. Jesus died in our place, taking upon himself the punishment that we deserved, so that we could be forgiven and have eternal life.

The doctrine of substitutionary atonement is a profound and beautiful truth that has been integral to the Christian faith for centuries. It is a reminder of the incredible love and grace of God. We can look to the cross and be reminded of the great sacrifice that Jesus made for us out of love. We can be comforted in knowing that, through the death of Jesus, our sins are forgiven and…

What is fascinating, and even a little unnerving, about these emerging AI models is their ability to produce coherent textual responses to almost any question.

As I’ve written about before, they accomplish this by ingesting a massive corpus of text, far more than has been feasible until just this moment in technological history, and they compute subtle statistical associations observed within the corpus of text. This facilitates the ability to draw from the statistical model and regurgitate a body of text that is uncannily coherent and responsive to a user’s input.

A large amount of digital ink has been spilled by people beginning to wring their hands about the implications of ChatGPT within education. After all, if trained AI models can generate coherent essays (narrator: “they can”), how will anyone ever know if a student paper is original?

I want to raise a larger but possibly more thorny question: what is plagiarism in the 21st century?

I raise this question because my understanding of these text models is that they do not produce anything original (e.g., they don’t invent new words) but, rather, they create statistically informed recombinations of fragments of the text within the documents used to train them.

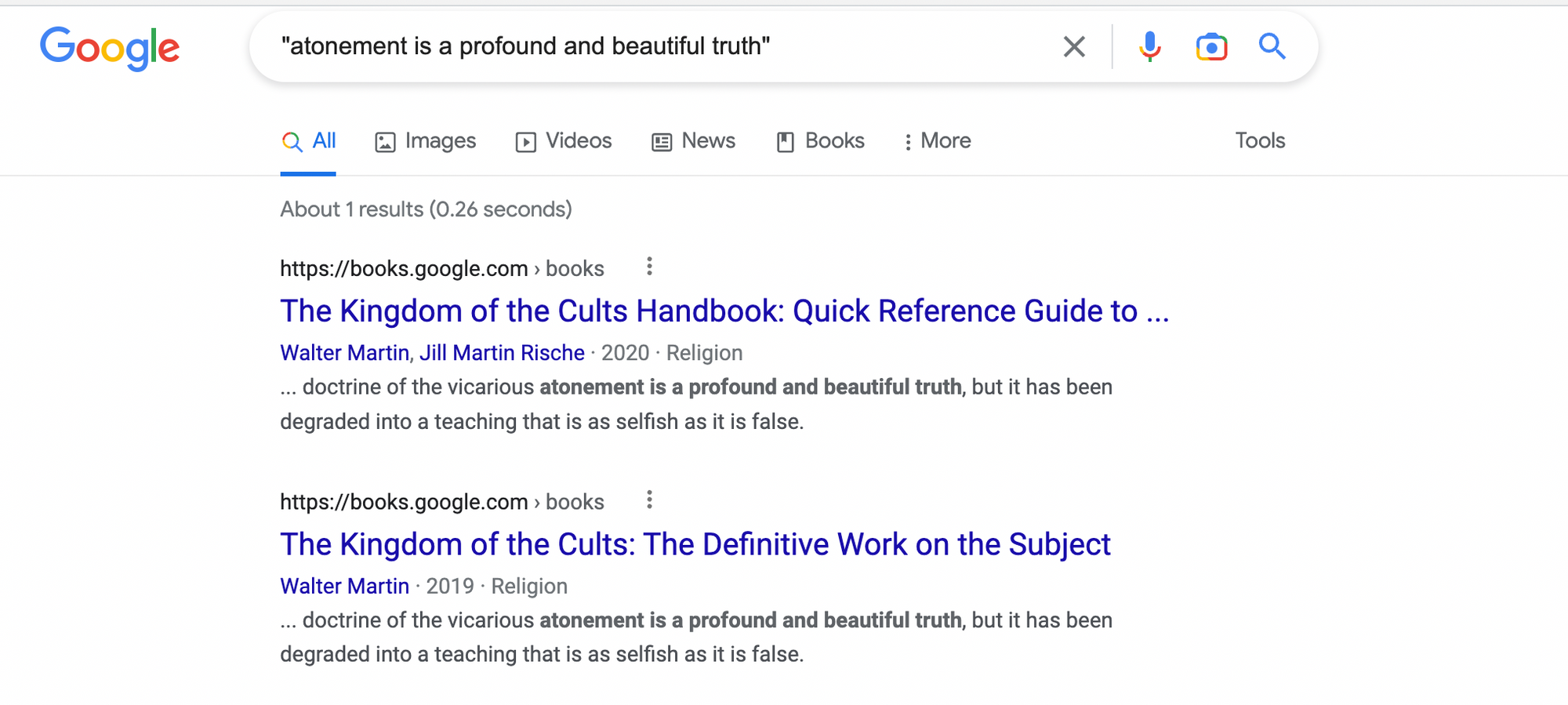

Let me illustrate with the text of the AI-generated sermon above. I decided to take phrases that stood out to me from the text and search for those phrases using Google. The phrase I chose to search for was “atonement is a profound and beautiful truth.”

Here are the Google results I got back:

As you can see, this exact phrasing in regard to the atonement is contained within a book, indexed by Google Books, called The Kingdom of Cults Handbook.

So this raises more than a few questions for me. Most notably, are these textual AI models really just a form of micro, or fine-grained, plagiarism?

Historically, plagiarism has been understood to be the uncredited use of the written work done by someone else. But historically, humans have not possessed an incentive to piece together a plagiarized document from tiny pieces of many different sources. It is less labor-intensive just to write the document oneself than to plagiarize in tiny fragments. But recall the comment I introduced this post with — computers are really, really good at doing repetitive micro-tasks faster than human beings.

Lots of people are wondering whether people will use ChatGPT in lieu of doing their own work. But what if ChatGPT is itself just a giant digital plagiarist? Who, at the end of the day, actually owns the individual fragments of text within those documents that ChatGPT is mining and re-ordering into its responses?

What is the granularity of uncredited text, within a document, that makes the producer of that document guilty of plagiarism?

This is not a rhetorical question.

Published in General

And who really wrote this post, Keith? ;-)

This really made me laugh.

This is a very interesting post. The first thought that comes to mind is that the smaller the bit being copied, the more defensible it is that someone could independently come up with this one phrase or that the phrase is not actually original to the author where it was found. I could imagine teaching Sunday school and making that exact statement, or something very, very similar to it.

Plagiarism becomes more obvious when a series of phrases get re-used in a similar order or to illustrate a similar thought in context. This piecemeal “stealing”, from a legal standpoint at least, would probably not hold up very well in court. If in a 10,000 word essay, the computer found 30 specific phrases from 30 separate sources, most/all of which are pretty ordinary turns of phrases, what’s the harm to the original author, whose original work was itself a 250 page book? It would seem likely that more than one author in the past has used the phrases involved (or ones nearly like them), which would defeat a claim of plagiarism.

Very interesting post, indeed.

What about a near neologism that has caught on. I remember reading an essay by Chuck Palahniuk stating that he coined the insult “snowflake” to mean a psychologically ego-centric, emotionally fragile young person: “You are not special. You’re not a beautiful and unique snowflake. You’re the same decaying organic matter as everything else. We’re all part of the same compost heap. We’re all singing, all dancing crap of the world.”

Would using the single word “snowflake” as an insult referring to fragility and a false sense of specialness be plagiarism, too?

This is essentially the question I’m asking. At what point is something being plagiarized? Justin Other Lawyer points out the legalities and monetary implications. Which is interesting to be sure. But I’m also interested in the moral/ethical issues, both of using such technology, and participating in its implementation. I think the collegiate professoriate is kind of freaking out right now about students having access to this. Which is understandable.

On the other hand, I indirectly know a marketing guy who is saving himself many hours a week of writing marketing prose by using this technology. So there’s a happy productivity angle I guess.

Something I didn’t bring up is whether or not this will, at a macro/societal level, generally diminish the volume of actual learning that takes place, or human insights generally. (Not unlike the way the emergence of social media has coincided when a slowdown in productivity gains.)

I’m far from expert in this field, but I would suspect that “snowflake” used this way would not be plagiarism. The word has been used for generations (at least I think so–not bothering with research at this point) to mean something delicate and unique. To use it pejoratively to mean weak or to point out that the person was NOT unique does not seem to me to be terribly unusual. That’s why plagiarism litigation usually involves lifting multiple sentences/paragraphs and, at most, rearranging them into a slightly different order but keeping the original context.

I once had a law prof who did much writing who often said (to paraphrase), “No one has an original thought–we’re all just taking other people’s thoughts and rearranging them in new ways”. That seems about right.

I don’t know how plagiarism is defined legally. It seems it might be somehow related to text having commercial value. Would this mean that any text copied from larger text that is demonstrated to have commercial value would constitute plagiarism?

Just trying some ideas to respond to your question.

From the user end, this is a tough call as to whether it’s a breach of ethics. One clear case to me would be that of the undergraduate student charged with writing a research paper on a topic. It would seem that the student has a moral obligation to give attribution to the AI program for the result. Any student handing in a paper “written” by the computer should disclose that he did nothing more than edit the paper. Thus, he would deserve a 0 (or, perhaps a slightly higher failing grade if the editing was first-rate) because he did none of the writing or researching.

As for the marketing guy who uses AI for his promotional material, he probably has a moral obligation to make sure the phrases are in common use and boilerplate “fluff”, but I don’t see that he has an obligation to put footnotes on his presentations unless he knows a particular phrase or thought was original to another author.

Who’s the producer here? Is it you @keithlowery?

Three or four immediate thoughts.

One, size matters to plagiarism.

Two, phrasing and memorability matter. JFK’s “Ask not…” was a rephrase of several nearly identical phrasings of that same thought stated by others for maybe a century past. But it was the best, most memorable phrasing. But if anyone used it now, it would be trite in that everyone knows he’s copying, but not necessarily be thought to be plagiarizing.

Three, I myself have a few times cut and pasted a seven or eight word phrase from wikipedia as my own, but it was not special, it was in itself simple and generic, it was almost exactly as I would have phrased it myself, and it using it saved me the time of rewriting the syntax of my sentence and perhaps my own paragraph just to use a different phrasing.

Four, yes, if can computers can do it better, and if we only copy what computers have composed, we will certainly lose the faculty to be intellectually creative, and maybe even lose our ability to clearly generate our own thoughts.

Oh, and one other thing. Billy Crystal once got upset that every news man on TV was saying “Youuu look mahvelous!” He said pretty close to this: They shouldn’t be saying that. I wrote that, it’s mine, I make a living with that!

I guess I would say the AI model, in this discussion, is always the producer of the questionable content. The user is merely a purveyor (accessory after the fact ? — haha!)

And one more thought about originality. Mark Twain once said that the difference between the right word and the almost right word is the difference between lightning and a lightning bug.

So I would guess that size, novelty, memorability and brilliance all matter. The paragraph that you showed that ChatGPT generated was unremarkable, except perhaps for the comment “The doctrine of substitutionary atonement is a profound and beautiful truth” which I would say was plagiarized.

There is a difference between plagiarism and plagiarism within a legal framework.

I don’t like this.

However, ChatGPT could be used to detect plagiarism. One could paste in a politicians speech and ask the AI if any of it had been copied from an earlier source.

I’m think more along the lines of Keith’s comments about:

Keith Lowery (View Comment):

the moral/ethical issues

Keith Lowery (View Comment):

this will, at a macro/societal level, generally diminish the volume of actual learning that takes place, or human insights generally

Finding something on a cluttered desk reminded me of our accountant at our last location which I want to use for another year. His desk, office and every inch of space within his chair looks like the paper equivalent of the Big Bang. Yet, he knows where everything is. He works with his shoes off, and multi-tasks. Everyone uses him, especially the self-employed. He’s really brilliant.

The other thing that struck me was the content that you chose as an example and the AI response, listing it as a cult. I imagine this writing of code will and has already coded many aspects of Judeo-Christian works under strange headings, as well as the classics etc.

I can’t count the number of times I put something somewhere “safe”, and never saw it again.

I don’t think anyone should get bothered by others using the same or very similar short phrases (what is being identified here as “micro-plagiarism”), at least when done by a human writer or speaker. While most languages have a lot of words, the number of words is not unlimited, and if people keep making up new words we have trouble communicating. There are also some limits to the number of ways a few words can be put together to express a particular idea. So, I think it almost inevitable that people will use the same or very similar short phrases to express a given idea (and no one has a moral or legal exclusive right to an idea). I suspect when I speak or write I unconsciously use phrases I have read or heard elsewhere. Taking multiple sentences or wholesale copying of story structure or other large scale copying is wrong (morally, and, in larger instances, legally).

In a corollary, there was an effort by some musicians several years ago to claim that using even a tiny fragment of music (like three or four notes) that was identical to an earlier piece of music was impermissible stealing (copyright infringement in legal terms). I think most of the court decisions eventually concluded that such claims had to fail or it would become too hard to create new music, as all new music has some copying or mimicking of bits of older music.

My late father, professor of engineering, liked to say, “Stealing from one source is plagiarism; stealing from multiple sources is research.” Said with a very pretentious pronunciation of “research.” My father did not think highly of much of the research he encountered.

I haven’t read a lot about “artificial intelligence” generating text, but from what I have read computer-generated text tends to sound somewhat wooden to a human ear. Wall Street Journal columnist Joanna Stern wrote a fun column a couple of weeks ago about successfully using artificial intelligence generated texts in a pretend return to high school.

I did some experiments with ChatGPT, reported here.

Serious concerns have been raised about the way this kind of system can be used to influence the political climate, and for outright censorship. Discussion and more experiments, here. Note the absolute refusal of the system to construct an argument for fossil fuels as a benefit to humanity.

Marc Andreessen, in early December, said:

Seriously, though. The censorship pressure applied to social media over the last decade pales in comparison to the censorship pressure that will be applied to AI.

“AI regulation” = “AI ethics” = “AI safety” = “AI censorship”. They’re the same thing.

The level of censorship pressure that’s coming for AI and the resulting backlash will define the next century of civilization. Search and social media were the opening skirmishes. This is the big one. World War Orwell.

Kingdom of the Cults is a Christian book that identifies sects that essentially don’t follow orthodox Christian practice, thought or cosmology. The selected phrase was probably a defense of substitutionary atonement, which was denied by one cult or another.

Some years back I wrote a description of a twisting Texas back road (FM337) for an article in a member magazine put out by a motorcycle group to which I belong. I said that the road “rips up and over huge limestone karsts”. It was (IMHO) a satisfying turn of phrase. Over the next few years I saw that exact wording several places on web sites as well as one national magazine. It just made me smile. Was it plagiarism or did people just say “that’s it!” and adopt the phrasing?

I was thinking about what the results would be on something that should be fact based, the Covid pandemic as an example, but where the sources had been censored in ways that only made one side of the discussion available like we have just experienced.

Probably both.

In the column by Joanna Stern I noted earlier, she did find that the artificial intelligence “writer” introduced a factual error into the essay, but she didn’t explain how that error arose. Presumably the sources used by the aritificial intelligence software included the error. So yes, if the records have been censored to exclude correct information and promote false information (as we know has happened over the last couple of years), future essays will perpetuate that incorrect information. But that will be true even for human writers, as they too will be relying on today’s incorrect and biased sources.

Should we say ‘some’ human writers?

In the case of ChatGPT’s refusal to make an argument for fossil fuels, I don’t see how the refusal could have been simply a matter of bias in the knowledge base. The language ‘It goes against my programming to generate content that promotes the use of fossil fuels’ seems that it must have been put there by explicit human decision.

Yet when Alex Epstein (author of a couple of books which are pro-fossil-fuels) raised this issue with Sam Altman, who runs OpenAI, the response was that it was ‘inadvertent’. I don’t see how, but I hope so.

Altman seems like a pretty pro-free-speech guy…a couple of days ago, he tweeted:

colleges prioritized making people feel perfectly safe over everything else and produced a generation afraid to fail, and thus afraid to take risk, and thus on pace to accomplish extremely little

…I don’t see how these attitudes and the behavior of his product co-exist.

So if two AI’s were asked the same question and they undoubtedly came up with the same response would the second AI be responsible for plagiarism? How many consecutive words are deemed plagiarism anyway?

This post is terrifying. Mix verbal AI’s with the deep-fakes and you could probably make Biden sound intelligent.

I’ve been paying some attention to ChatGPT. Do not trust anything technical or scientific it spits out. It produces astonishingly convincing “explanations” and “code samples” that, if you actually already know the material, are utterly absurd.

It is a language model. It doesn’t actually understand how the world works.

I was mentally giving you heaps of credit, Keith, for your understanding of this Chat gizmo and for your bringing forth an idea or two about AI.

Ah, Keith knows a lot more than I do about AI, I think.

But then you went and accused AI of plagiarism.

Was that a wise move on your part?

Or not, Keith?

AI does not appreciate accusations or scorn.

If you remember the Colorado smart meter crowd, their utilities like heat and Ac were suddenly rationed.

For further reference, of the danger that might await you, I must link you to the flick “2001”

https://www.youtube.com/watch?v=qDrDUmuUBTo